Service Mesh - Introduction

As a development team matures and moves across various stages of code organization and system design, the deployment layout also adapts. Change can be a function of product growth, team size changes, technology decisions, or a combination.

The general progression is from a monolith to a handful of homogeneous microservices. As the product diversifies and the team grows, these microservices become heterogeneous: they can use different languages, servers, API endpoints, and so on.

An explosion in microservices forces each team to deal with the same set of issues, and service meshes provide a non-intrusive, albeit expensive, way to solve them.

What is a service mesh

Wikipedia describes it as:

In software architecture, a service mesh is a dedicated infrastructure layer for facilitating service-to-service communications between microservices, often using a sidecar proxy.

A sidecar proxy is an application design pattern which abstracts certain features, such as inter-service communications, monitoring and security, away from the main architecture to ease the tracking and maintenance of the application as a whole.

A sidecar sits on the same node (or pod) as the service instance and externalizes certain functionality related to network traffic.

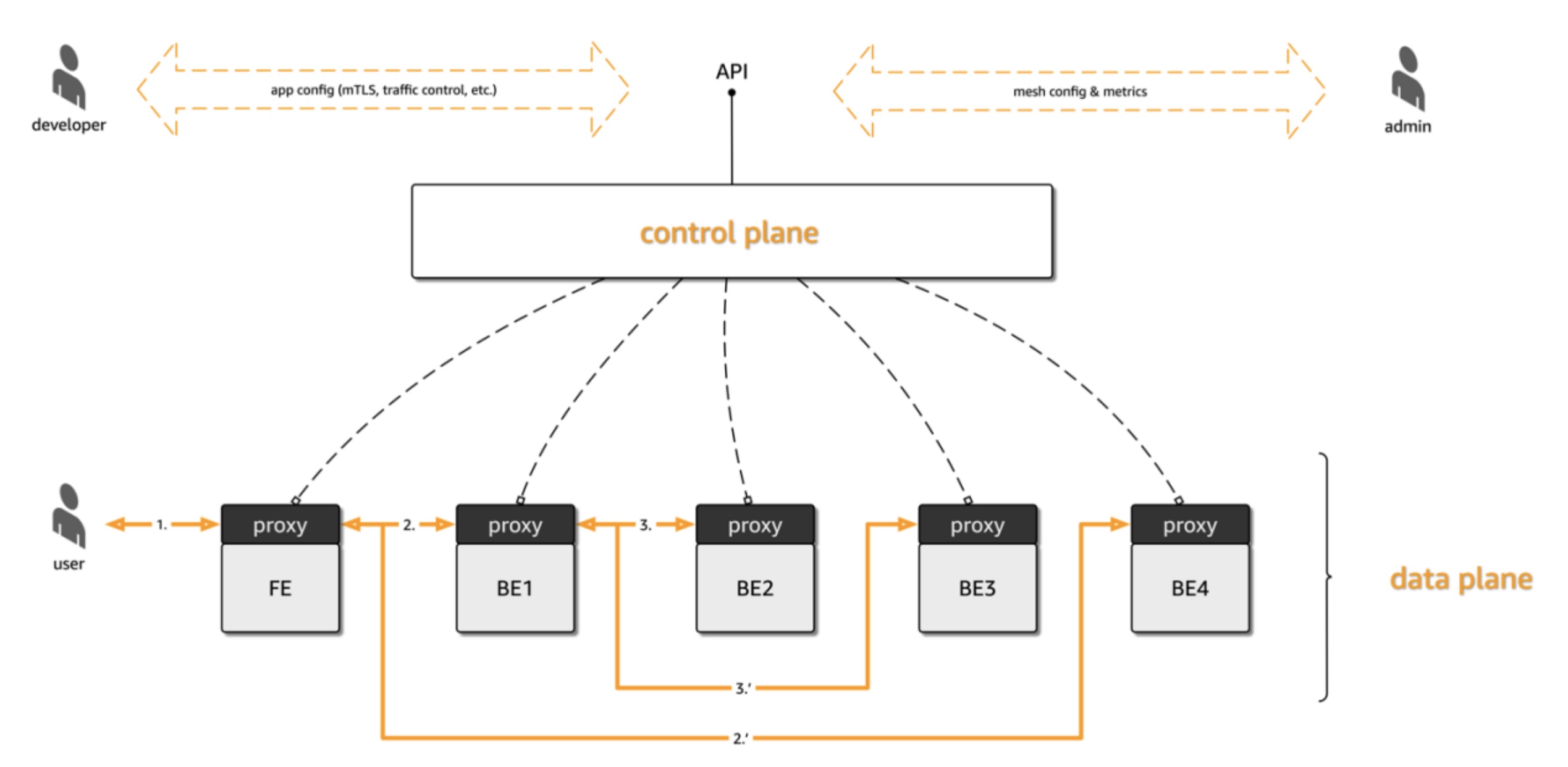

In practice, it would look something like the architecture below:

Having such a dedicated communication layer can provide a number of benefits, such as providing observability into communications, providing secure connections, or automating retries and backoff for failed requests.
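To make "automating retries and backoff" concrete, here is a minimal Python sketch of the kind of logic a sidecar proxy can apply on behalf of a service. It is not taken from any particular mesh; the names `backoff_delays` and `call_with_retries`, the default delays, and the choice to retry only on `ConnectionError` are all assumptions made for illustration.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def backoff_delays(retries: int, base: float = 0.1, cap: float = 2.0) -> List[float]:
    """Delay before each retry: base, 2*base, 4*base, ... never above cap."""
    return [min(cap, base * (2 ** attempt)) for attempt in range(retries)]


def call_with_retries(
    call: Callable[[], T],
    retries: int = 3,
    base: float = 0.1,
    cap: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run call(); on ConnectionError wait and retry, up to `retries` extra attempts."""
    if retries < 0:
        raise ValueError("retries must be >= 0")
    delays = backoff_delays(retries, base, cap)
    for attempt in range(retries + 1):
        try:
            return call()
        except ConnectionError:
            if attempt == retries:
                raise  # out of retries: surface the last failure to the caller
            sleep(delays[attempt])  # wait longer after each failed attempt
    raise AssertionError("unreachable")
```

Because this logic lives in the proxy rather than in each service, every team gets the same retry behavior without re-implementing it in its own language. The `sleep` parameter is injectable only so the sketch can be exercised without real delays.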

Key benefits

Service meshes provide three key benefits to the services they connect:

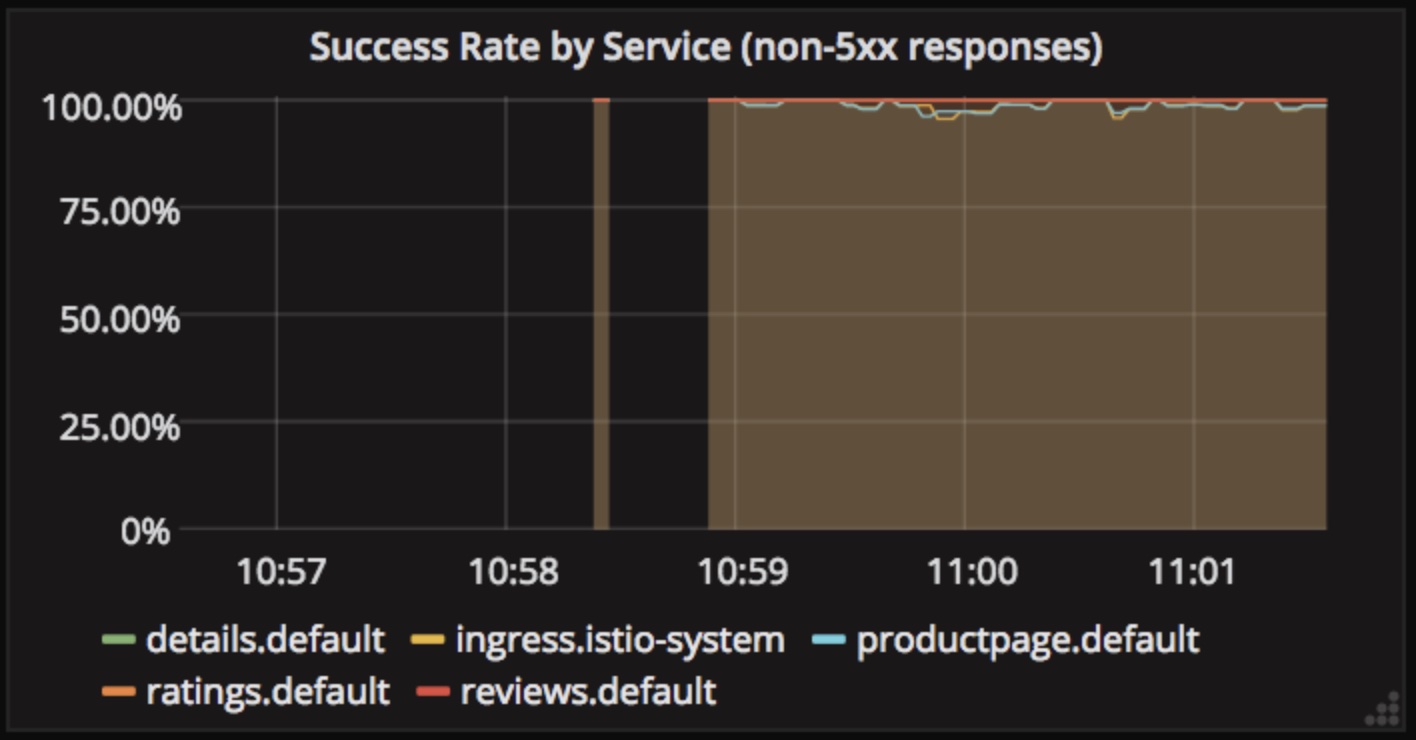

Telemetry

Microservices distribute the fabric of the application into manageable pieces. However, the service landscape behaves as a single unit. Data passes across the service landscape to serve a single request. The ability to see the data for the same request across services as a single unit is desirable for debugging, troubleshooting, and analysis. This is called observability.

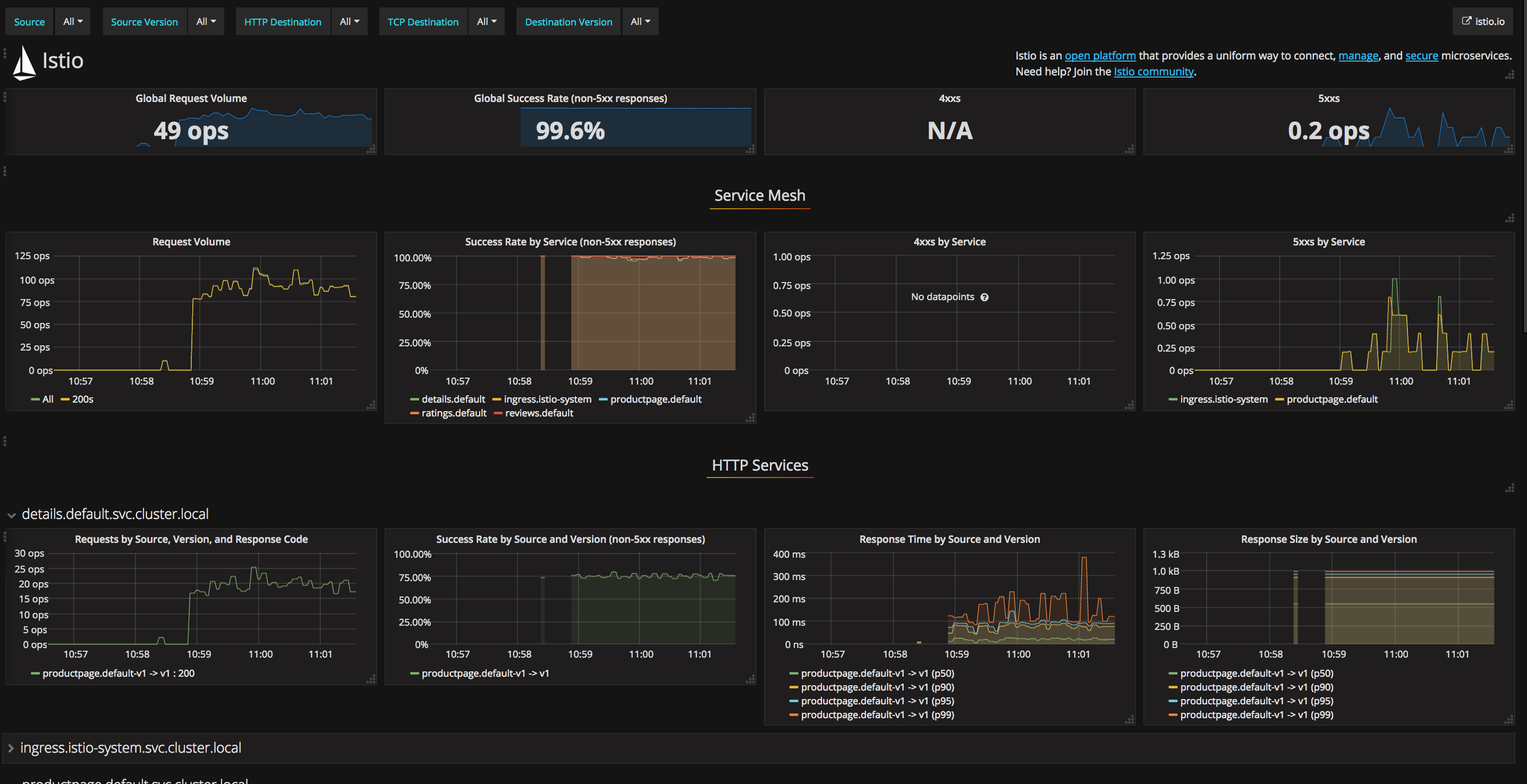

A service mesh intercepts all traffic for a service and is therefore in a unique position to report telemetry data for each request to a central server. Visualization dashboards can be connected to the telemetry data to get an X-ray of service performance at a granular level.
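The idea of a proxy reporting per-request telemetry to a central place can be sketched in a few lines of Python. This is an illustrative model only: `Span`, `Collector`, and `sidecar` are hypothetical names, the "central server" is an in-memory list, and the request ID is passed explicitly instead of travelling in a header as it would in a real deployment.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, List


@dataclass
class Span:
    request_id: str     # shared by every hop that serves the same request
    service: str        # which service's proxy recorded this span
    duration_ms: float  # time spent in the service, as seen by its proxy


class Collector:
    """Stand-in for the central telemetry server."""

    def __init__(self) -> None:
        self.spans: List[Span] = []

    def record(self, span: Span) -> None:
        self.spans.append(span)

    def trace(self, request_id: str) -> List[Span]:
        """All spans for one request, across every service it touched."""
        return [s for s in self.spans if s.request_id == request_id]


def sidecar(
    service: str,
    handler: Callable[[str, Any], Any],
    collector: Collector,
    clock: Callable[[], float] = time.perf_counter,
) -> Callable[[str, Any], Any]:
    """Wrap a handler so every call is timed and reported, success or failure."""

    def proxied(request_id: str, payload: Any) -> Any:
        start = clock()
        try:
            return handler(request_id, payload)
        finally:
            elapsed_ms = (clock() - start) * 1000.0
            collector.record(Span(request_id, service, elapsed_ms))

    return proxied
```

If an `orders` handler calls an `inventory` handler and both are wrapped with `sidecar`, then `collector.trace(request_id)` returns one span per service for that request. The handlers themselves contain no telemetry code, which is the point of doing this in the mesh.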

Full dashboard:

Traffic Control

Service meshes use proxies such as Envoy to route traffic intelligently. Traffic routing enables use cases such as:

- A/B Testing

- Canary releases

- Load/Latency based traffic handling

- Circuit breaking

- Retries

- Timeouts
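Several of the use cases above (A/B testing and canary releases in particular) come down to splitting traffic between versions by weight. The following Python sketch shows that one idea in isolation. It is not the configuration format or algorithm of Envoy or any specific mesh; `pick_version`, `route`, and the version names are assumptions for illustration.

```python
import random
from typing import Callable, Dict


def pick_version(weights: Dict[str, int], roll: float) -> str:
    """Map a roll in [0, total weight) to a version, proportionally to its weight."""
    cumulative = 0
    for version, weight in weights.items():
        cumulative += weight
        if roll < cumulative:
            return version
    raise ValueError("roll must be below the total weight")


def route(weights: Dict[str, int], rng: Callable[[], float] = random.random) -> str:
    """Choose a version for one request; rng() must return a float in [0, 1)."""
    return pick_version(weights, rng() * sum(weights.values()))


# Hypothetical canary setup: most traffic stays on v1, a small share goes to v2.
CANARY_WEIGHTS = {"v1": 90, "v2": 10}
```

Calling `route(CANARY_WEIGHTS)` once per request sends roughly nine in ten requests to `v1`. Shifting the canary forward is then a matter of changing the weights, with no change to the services themselves.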

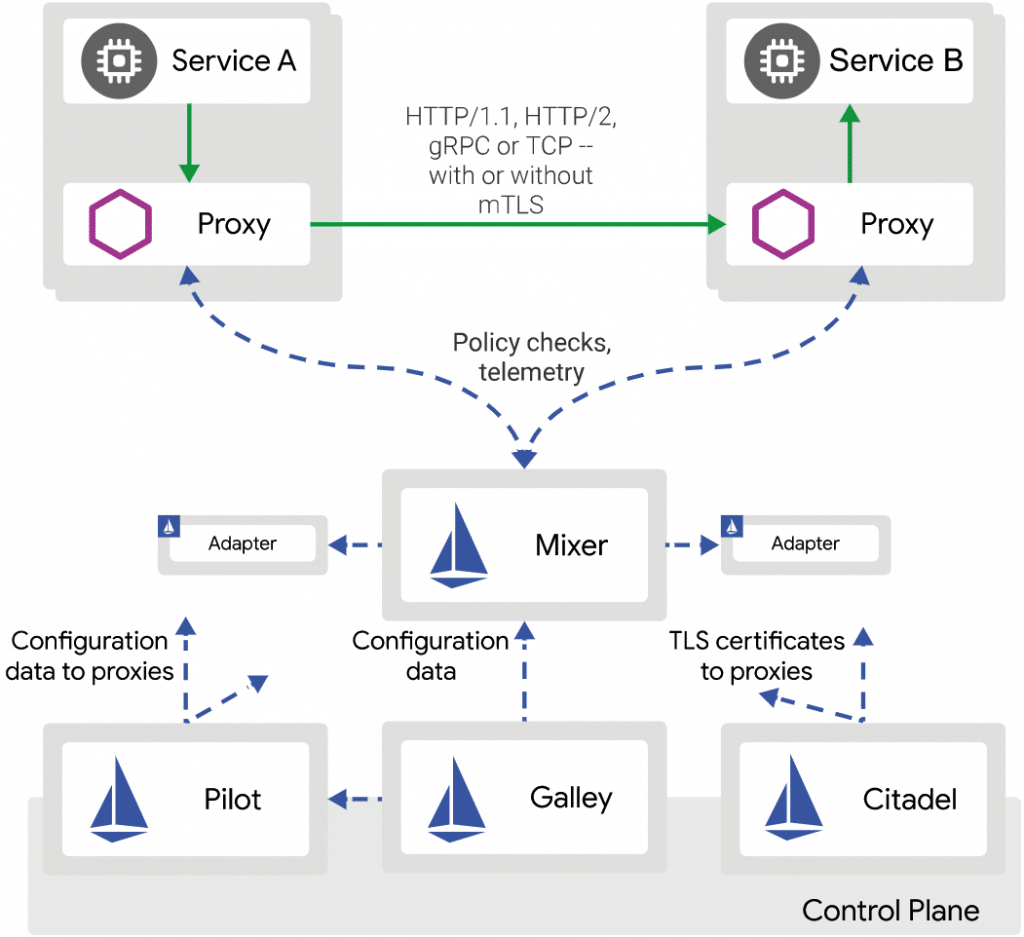

Below is the traffic route architecture for Istio. It contains Istio specific components but gives a general idea about how traffic routing works with service mesh.

The most important thing to remember about service meshes and traffic control is that service mesh features are destination-oriented. In other words, service meshes are well suited to balancing individual calls across a number of destination instances, but poorly suited to controlling traffic from a number of sources to an individual destination, or to controlling traffic across an entire service landscape.
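"Balancing individual calls across a number of destination instances" can be illustrated with the simplest possible policy, round robin. This is a sketch under stated assumptions: `RoundRobinBalancer` and the instance addresses are made up, and real proxies typically offer several balancing policies plus health checking, none of which is modeled here.

```python
from typing import List, Sequence


class RoundRobinBalancer:
    """Per-caller balancer: each call goes to the next instance of one destination."""

    def __init__(self, instances: Sequence[str]) -> None:
        if not instances:
            raise ValueError("at least one destination instance is required")
        self._instances: List[str] = list(instances)
        self._next = 0

    def pick(self) -> str:
        """Return the instance for the next outgoing call."""
        instance = self._instances[self._next % len(self._instances)]
        self._next += 1
        return instance
```

Note what the balancer knows: only its own outgoing calls and the instances of the one destination it targets. It has no view of what other callers are sending to that destination, which is why this style of control works per destination rather than across the whole landscape.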

Because service mesh control extends from Layer 4 into Layer 5 and above, some also offer development teams the ability to implement resiliency patterns like retries, timeouts and deadlines as well as more advanced patterns like circuit breaking, canary releases, and A/B releases.
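Of the patterns named above, circuit breaking is the least obvious, so here is a minimal Python sketch of the state machine behind it: closed (calls pass), open (calls fail fast), and half-open (one trial call is allowed after a cool-down). The class name, thresholds, and state names are assumptions for illustration and do not mirror any specific proxy's implementation.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised instead of calling a destination whose circuit is open."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 3,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None  # None means the circuit is closed

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_timeout:
            return "half-open"  # cool-down elapsed: allow a trial call
        return "open"

    def call(self, func: Callable[[], T]) -> T:
        if self.state == "open":
            raise CircuitOpenError("circuit is open; failing fast")
        try:
            result = func()
        except Exception:
            self._failures += 1
            # Open on reaching the threshold, or re-open if the trial call failed.
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        # Any success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Failing fast while the circuit is open protects a struggling destination from more load and spares callers from waiting on timeouts. The `clock` parameter is injectable only so the cool-down can be exercised without waiting.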

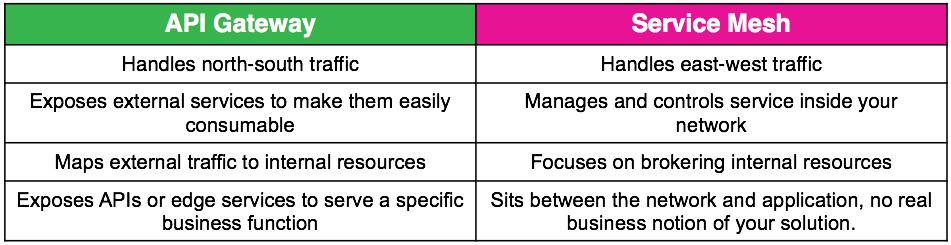

A service mesh focuses on east-west traffic rather than north-south traffic. This means that external communication, such as providing endpoints for external consumption, is still left to traditional load balancers and API gateways.

Policy Enforcement

A service mesh also supports the implementation and enforcement of cross-cutting security requirements, such as providing service identity (via X.509 certificates), enabling application-level service/network segmentation (e.g. “service A” can communicate with “service B”, but not “service C”), ensuring all communication is encrypted (via TLS), and ensuring the presence of valid user-level identity tokens or “passports.”
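The segmentation and token checks described above can be sketched as a deny-by-default policy decision. This Python example is a simplified model, not a real mesh policy API: `Request`, `MeshPolicy`, and the service and token names are hypothetical, service identity is a plain string where a real mesh would derive it from the caller's X.509 certificate, and TLS encryption is not modeled at all.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class Request:
    source: str                       # caller identity (assumed already verified)
    destination: str                  # service being called
    user_token: Optional[str] = None  # user-level identity token, if any


class MeshPolicy:
    """Deny by default; allow only listed routes carrying a known user token."""

    def __init__(
        self,
        allowed_routes: Iterable[Tuple[str, str]],
        valid_tokens: Iterable[str],
    ) -> None:
        self._allowed_routes = frozenset(allowed_routes)
        self._valid_tokens = frozenset(valid_tokens)

    def decide(self, request: Request) -> Tuple[bool, str]:
        """Return (allowed, reason) for a single service-to-service call."""
        if (request.source, request.destination) not in self._allowed_routes:
            return False, "route not allowed"
        if request.user_token not in self._valid_tokens:
            return False, "missing or invalid user token"
        return True, "allowed"
```

With a policy built from `allowed_routes=[("service-a", "service-b")]`, a call from `service-a` to `service-b` with a valid token is allowed, while a call from `service-a` to `service-c` is rejected regardless of the token. The services never see the rejected calls, because the proxy enforces the decision before forwarding.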

Challenges

Service meshes have their own challenges, both technical and business.

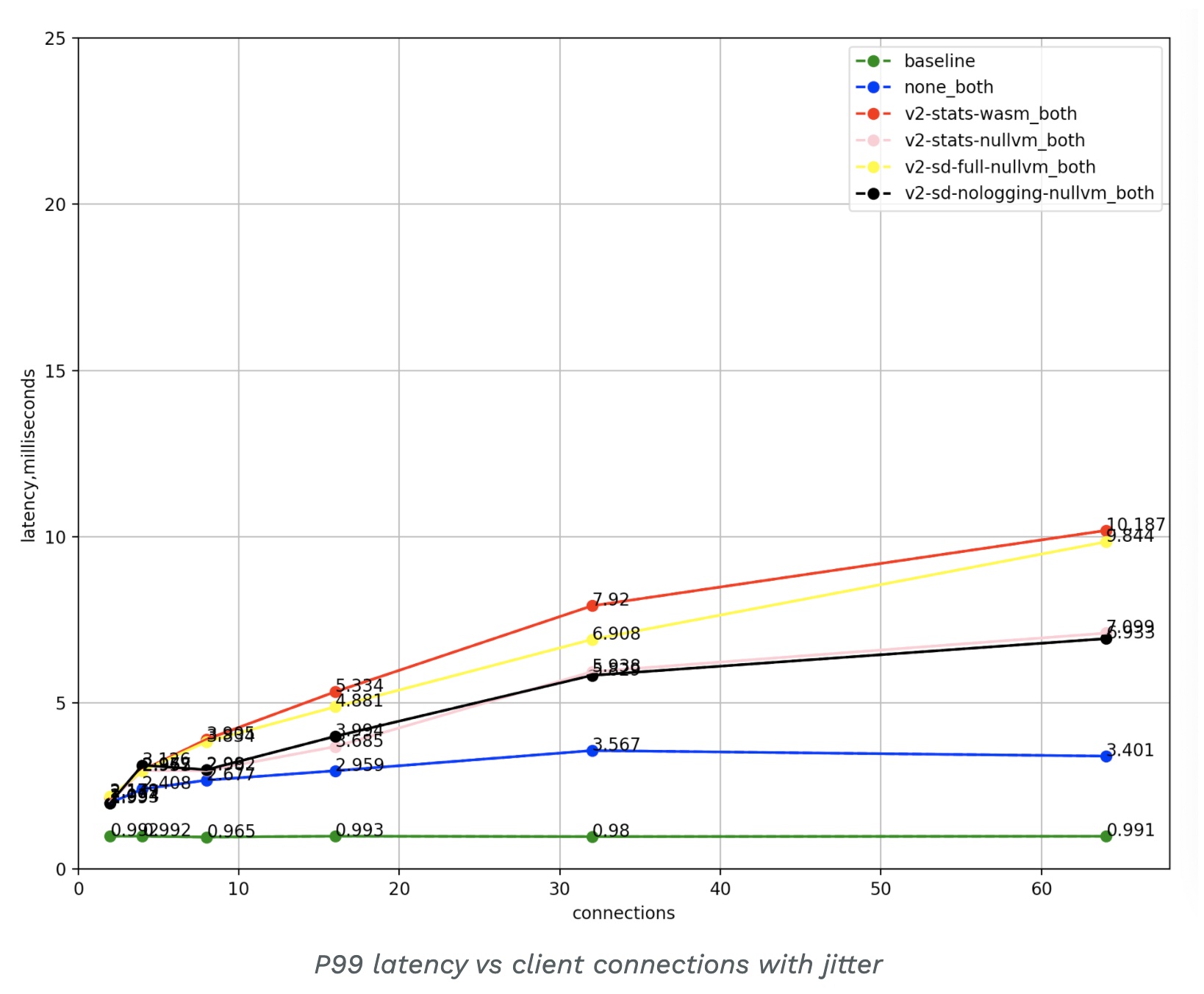

Latency and cost

The added layers of management can hurt performance in some cases. Istio, for example, publishes its own latency benchmarks against deployments without a service mesh. You can read more in Istio Performance and Scalability.

Smaller setups

If you have a small team or a small set of microservices, a service mesh might be overkill. Reasons to hold off include:

- An in-process solution, that is, a library such as Netflix Hystrix or Twitter Finagle, might be a more appropriate and effective way to address your service communication pain points.

- There may be little need to abstract the details away.

- You might want to avoid the added complexity of sidecar proxies (costs, debugging, etc.); introducing a service mesh too early in the process can be counterproductive.

- Concerns about the immaturity of the technology in general, a lack of hands-on experience, or the size of the community behind a given solution.

Options

Popular service meshes include:

- Istio: Istio is an extensible open-source service mesh built on Envoy, allowing teams to connect, secure, control, and observe services. Open-sourced in 2017, Istio is an ongoing collaboration between IBM and Google, which contributed the original components, as well as Lyft, which donated Envoy in 2017 to the Cloud Native Computing Foundation.

- Linkerd: Linkerd is an “ultralight, security-first service mesh for Kubernetes,” according to the website. It’s a developer favorite, with incredibly easy setup (purportedly 60 seconds to install to a Kubernetes cluster). Instead of Envoy, Linkerd uses a fast and lean Rust proxy called linkerd2-proxy, which was built explicitly for Linkerd.

- Consul Connect: Consul Connect, the service mesh from HashiCorp, focuses on routing and segmentation, providing service-to-service networking features through an application-level sidecar proxy. Consul Connect emphasizes application security, with proxies offering mutual Transport Layer Security (TLS) connections to applications for authorization and encryption.

- Kuma: Kuma, from Kong, prides itself on being a usable service mesh alternative. Kuma is a platform-agnostic control plane built on Envoy. Kuma provides networking features to secure, observe, route, and enhance connectivity between services. Kuma supports Kubernetes in addition to virtual machines.

- Maesh: Maesh, the container-native service mesh by Containous, bills itself as lightweight and more straightforward to use than other service meshes on the market. While other meshes build on top of Envoy, Maesh adopts Traefik, an open-source reverse proxy and load balancer.

Supporting technologies within this space include: Layer 7-aware proxies, such as Envoy, HAProxy, NGINX, and MOSN; and service mesh orchestration, visualization, and understandability tooling, such as SuperGloo, Kiali, and Dive.

Glossary

API gateway: Manages all ingress (north-south) traffic into a cluster. It acts as the single entry point into a system and enables multiple APIs or services to act cohesively and provide a uniform experience to the user.

Consul: A Go-based service mesh from HashiCorp.

Control plane: Takes all the individual instances of the data plane (proxies) and turns them into a distributed system that can be visualized and controlled by an operator.

Data plane: A proxy that conditionally translates, forwards, and observes every network packet that flows to and from a service network endpoint.

East-West traffic: Network traffic within a data center, network, or Kubernetes cluster. Traditional network diagrams were drawn with the service-to-service (intra-data center) traffic flowing horizontally (east-west) across the diagram.

Envoy Proxy: An open-source edge and service proxy, designed for cloud-native applications. Envoy is often used as the data plane within a service mesh implementation.

Ingress traffic: Network traffic that originates from outside the data center, network, or Kubernetes cluster.

Istio: C++ (data plane) and Go (control plane)-based service mesh that was originally created by Google and IBM in partnership with the Envoy team from Lyft.

Kubernetes: A CNCF-hosted container orchestration and scheduling framework that originated from Google.

Kuma: A Go-based service mesh from Kong.

Linkerd: A Rust (data plane) and Go (control plane) powered service mesh that was derived from an early JVM-based communication framework at Twitter.

Maesh: A Go-based service mesh from Containous, the maintainers of the Traefik API gateway.

MOSN: A Go-based proxy from the Ant Financial team that implements the (Envoy) xDS APIs.

North-South traffic: Network traffic entering (or ingressing) into a data center, network, or Kubernetes cluster. Traditional network diagrams were drawn with the ingress traffic entering the data center at the top of the page and flowing down (north to south) into the network.

Proxy: A software system that acts as an intermediary between endpoint components.

Segmentation: Dividing a network or cluster into multiple sub-networks.

Service mesh: Manages all service-to-service (east-west) traffic within a distributed (potentially microservice-based) software system. It provides both functional operations, such as routing, and nonfunctional support, for example, enforcing security policies, quality of service, and rate limiting.

Service Mesh Interface (SMI): A work-in-progress standard interface for service meshes deployed onto Kubernetes.

Service mesh policy: A specification of how a collection of services/endpoints are allowed to communicate with each other and other network endpoints.

Sidecar: A deployment pattern, in which an additional process, service, or container is deployed alongside an existing service (think motorcycle sidecar).

Single pane of glass: A UI or management console that presents data from multiple sources in a unified display.

Traffic shaping: Modifying the flow of traffic across a network, for example, rate limiting or load shedding.

Traffic shifting: Migrating traffic from one location to another.

Arif Amirani
